High FPS doesn't mean a smooth visual experience

Modern PCs are capable of rendering beautiful games at high resolutions. The system's graphics card is responsible for drawing each frame of a game and then sending out that information to the monitor, to be displayed on screen. Faster, more powerful graphics cards are able to either draw frames more quickly - leading to a higher frames-per-second metric - or can increase the visual quality by adding in extra effects and detail.

But the path from graphics card to display isn't without problems. There is often perceivable lag, tearing and stuttering, caused by a mismatch between the rate at which the graphics card sends frames to the monitor and the rate at which the monitor can update the screen and display them. The card and monitor work independently of one another. Let's take it a step further and examine what happens.

Some frames are easy for the graphics card to compute, others take a little longer, and it's this mix of short and long frames that determines the overall frame-rate. For example, in a first-person shooting game the frame-rate changes as the hero moves from one environment to another, engages enemies, and fires off armament that requires special effects to be rendered. In short, games have variable frame-rates.

In an ideal situation the monitor's panel would be refreshed as soon as the new frame was ready to be displayed. This would mean the screen refreshing very quickly when dealing with easy-to-render frames and then slowing the refresh pace down when heavy action increases the frame time and consequently reduces the frame-rate. Sounds simple, right? This is, somewhat surprisingly, not what happens in most cases.

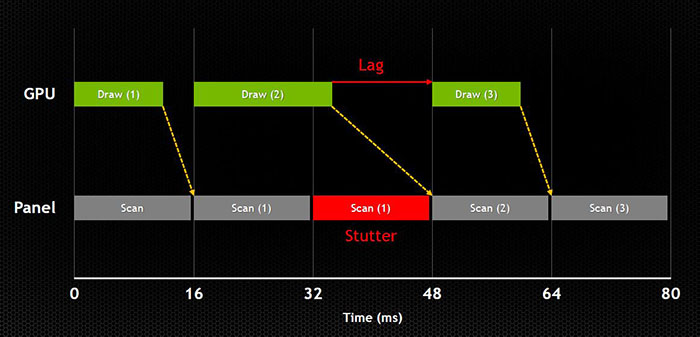

Most monitors ship with a 60Hz refresh rate, which means the panel can be updated up to 60 times every second. That equates to 60 frames per second or roughly 16.7 milliseconds per frame, depending on how you look at it. If, and it's a big if, a graphics card is able to continually surpass this 60fps barrier then all is good, as the displayed frames, updated very regularly, look smooth to the human eye, as shown in the graphic above. The graphics card is producing frames in under 16.7ms in every case and the panel is refreshing at the set 16.7ms interval (60Hz, 60fps).
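The arithmetic behind the 60Hz figure is easy to check. The short Python sketch below is purely illustrative; the function names are our own and are not taken from any driver or monitor API.

```python
def refresh_interval_ms(refresh_hz: float) -> float:
    """Time between two panel refreshes, in milliseconds."""
    return 1000.0 / refresh_hz


def fits_in_one_refresh(frame_time_ms: float, refresh_hz: float = 60.0) -> bool:
    """True if a frame is rendered within a single refresh interval."""
    return frame_time_ms <= refresh_interval_ms(refresh_hz)
```

For a 60Hz panel, `refresh_interval_ms(60)` comes out at roughly 16.67ms, so a 12ms frame fits inside one refresh while a 20ms frame does not.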

Visual problems with low frame-rates

But monitor technology hasn't radically evolved in the last 25 years. The screen can only be refreshed at set intervals - a by-product of using cathode-ray tube (CRT) monitors during the PC's infancy - and this rigid update schedule, which comes into play once the frame-rate has dropped below 60fps, is the cause of the visual issues.

Here, the second frame takes a long while for the graphics card to compute - more than 16.7ms - and even though the game uses a technique called v-sync to smooth out frames, the result is that the screen has to redraw the previous frame while it waits for the graphics card to catch up, which it does in frame three. The result? Lag and stutter that's immediately perceivable to the eye.
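To make the repeated frame concrete, here is a minimal Python sketch of v-sync on a fixed-rate panel. It rests on simplifying assumptions of our own: the graphics card starts the next frame only once the previous one has been shown, and a finished frame waits for the next refresh tick. Real drivers and games buffer frames in more elaborate ways, and the function names are hypothetical.

```python
import math


def vsync_display_ticks(render_times_ms, refresh_hz=60.0):
    """Return the refresh tick on which each frame first appears.

    Assumes (for illustration only) that rendering of a frame begins
    when the previous frame is displayed.
    """
    interval = 1000.0 / refresh_hz
    ticks = []
    start = 0.0
    for render_ms in render_times_ms:
        finish = start + render_ms
        # A finished frame has to wait for the next fixed refresh tick.
        tick = math.ceil(finish / interval)
        # Guard: a frame can never share a tick with its predecessor.
        tick = max(tick, ticks[-1] + 1 if ticks else 1)
        ticks.append(tick)
        start = tick * interval
    return ticks


def repeated_refreshes(ticks):
    """Count refreshes on which the panel had to show an old frame again."""
    return sum(later - earlier - 1 for earlier, later in zip(ticks, ticks[1:]))
```

With render times of 10ms, 25ms and 10ms on a 60Hz panel, `vsync_display_ticks([10, 25, 10])` gives `[1, 3, 4]`: the second frame misses tick 2, so the first frame is shown twice, and `repeated_refreshes` reports one repeated refresh.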

Switching off v-sync, which means the frames are displayed as soon as they're computed, causes what is known as tearing when the frame-rate drops below 60fps. This happens because the monitor refreshes while a new frame has only partly arrived, so the screen shows sections of two different frames and the image looks as if it has been torn.

There have been various techniques to address these common problems but none has managed to properly mitigate the mismatch between the graphics card's output and the monitor's timing schedule, especially when the frame-rate falls below 60fps. The gamer is left with a choice of either lag and stutter (v-sync on) or tearing (v-sync off).

The obvious solution - Nvidia G-Sync

What's needed is a system that instructs the monitor to refresh the screen immediately after the frame has been drawn by the graphics card, especially in the 30-60fps range that, for the reasons outlined above, monitors have difficulty dealing with. Graphics giant Nvidia has debuted such a system, dubbed G-Sync. It enables effective, coordinated communication between the graphics card and monitor by replacing the screen's scaler with an Nvidia-engineered board. Monitors can be fitted with this special piece of hardware in around 15 minutes, the company says, and a raft of G-Sync-enabled monitors is due to hit the market in 2014.

G-Sync works in a surprisingly simple way - it calculates how long the present frame takes to compute and then, crucially, varies the refresh rate of the monitor to match. It works between a minimum of 33.3ms (30fps) and the maximum supported refresh of the display. The key takeaway here is that the graphics card and monitor are both synced up to one another - the monitor doesn't have the limitations imposed by a rigid, fixed-rate scanning routine.
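The idea can be sketched in a few lines of Python. This is not Nvidia's implementation: the function name is hypothetical, the 144Hz maximum is an assumed example panel, and clamping frames that fall outside the supported range is our own simplification of whatever the hardware actually does there.

```python
def variable_refresh_interval_ms(frame_time_ms: float,
                                 max_refresh_hz: float = 144.0,  # assumed panel limit
                                 min_refresh_hz: float = 30.0) -> float:
    """Pick a refresh interval that follows the frame time.

    The interval tracks the frame time, bounded by the panel's fastest
    refresh and by the 33.3ms (30fps) floor mentioned in the text.
    """
    shortest = 1000.0 / max_refresh_hz   # fastest the panel can refresh
    longest = 1000.0 / min_refresh_hz    # 33.3ms at 30fps
    return min(max(frame_time_ms, shortest), longest)
```

A 20ms frame (50fps) is shown after 20ms rather than being held until the next 16.7ms boundary at 33.3ms, which is where the fixed-rate panel would stutter.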

The main benefits are derived when games run at 40-60fps, often considered the sweet spot between frame-rates and high-quality visuals. By dynamically adapting the monitor's output to match the graphics card and only displaying once a frame is complete, Nvidia is able to provide a much smoother gaming experience than with traditional monitors that can be, and often are, afflicted by tearing, stuttering and lag.

Having seen G-Sync in action first-hand, we can say the visual improvement is nothing short of remarkable. The smooth frame-rates delivered by G-Sync are in direct contrast to the juddery and laggy images produced on a normal monitor - and remember, the underlying PC hardware is exactly the same.

G-Sync does a great job in correcting one of the most obvious flaws in PC gaming in the crucial 40-60fps range. But there is a price to pay for such gaming goodness: G-Sync, quite understandably, is only available on Nvidia GeForce cards based on the Kepler architecture - GeForce GTX 650 Ti Boost and above - and monitors equipped with the technology command a $100 premium over non-enabled displays.