Cortex-A75

ARM is today launching two Cortex-A-class processors that push performance further than ever before and bring more intelligent computing capability to the edge: the device itself.

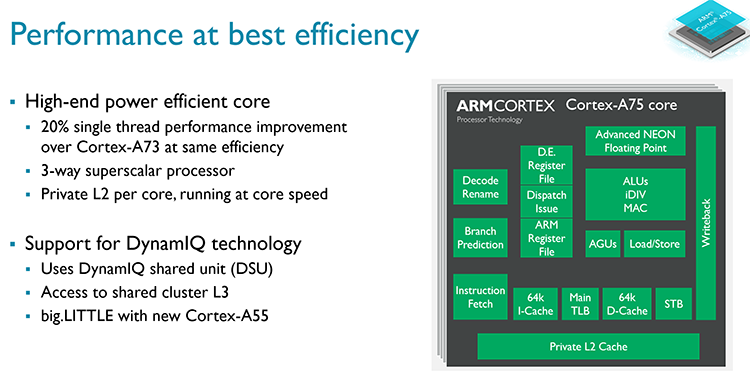

The Cortex-A75 and Cortex-A55 are also significant because they are the first processors to harness DynamIQ, ARM's new multi-core technology built for design flexibility and multi-core efficiency.

As anyone who has followed ARM's recent nomenclature will know, Cortex-A7x processors occupy the premium space and are consequently found in high-end smartphones, tablets and heavy-duty networking equipment and, looking to the future, in mobile artificial intelligence and machine learning environments.

Cortex-A75 follows in the footsteps of 2015's Cortex-A72 and last year's Cortex-A73, with the latter now shipping in the Huawei Mate 9 and forming the foundations of the Qualcomm Snapdragon 835's CPU.

Balancing the need for more horsepower against the need to maintain excellent efficiency, Cortex-A75 offers up to 50 per cent more performance than the CPUs in shipping handsets at similar energy levels, according to ARM. That's a big deal. Cortex-A75 will be present in premium smartphones in 2018, so let's take a look at how ARM has upped its performance mojo.
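To see why more performance at similar energy levels matters, here is a back-of-envelope sketch. It assumes "similar energy levels" means similar power draw, and that the 50 per cent figure translates directly into a task finishing in 1/1.5 of the time; both are simplifications, and the 2W baseline is purely hypothetical.

```python
def energy_per_task(power_watts: float, task_seconds: float) -> float:
    """Energy in joules needed to finish one task: E = P * t."""
    return power_watts * task_seconds

# Hypothetical baseline: a task taking 1.0 s on a current CPU drawing 2 W.
baseline_power = 2.0
baseline_time = 1.0

# Assumption: 1.5x performance at the same power shortens the task by 1.5x.
speedup = 1.5
new_time = baseline_time / speedup

old_energy = energy_per_task(baseline_power, baseline_time)
new_energy = energy_per_task(baseline_power, new_time)

print(f"baseline: {old_energy:.2f} J, faster core (assumed): {new_energy:.2f} J")
print(f"energy saved per task: {1 - new_energy / old_energy:.0%}")
```

Under these assumptions the same job costs about a third less energy, because the core spends less time drawing power.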

Architected to be wider, faster and more efficient

Processor design is iterative. Architects take a good look at incumbent designs and then figure out how to tweak them further whilst also bringing in new ways of doing things. Typically, a new processor such as the Cortex-A75 will have an improved front end (branch prediction, instruction decode and dispatch), a 'better' core where all the calculations take place, and improved memory bandwidth, management and general throughput to oil the whole machine.

The most obvious change between generations is ARM's decision to return to a three-way decode design (as used in Cortex-A72) rather than the leaner two-way design deemed the best fit for the smartphone-optimised Cortex-A73. This alone gives the Cortex-A75 extra performance artillery. Further down the pipeline, instructions, which can be executed out of order, are pushed through seven independent queues, wider than any previous ARM design, and then retired.
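Decode width sets a hard ceiling on how many instructions the front end can hand to the back end each cycle. The sketch below computes that theoretical ceiling for a two-wide and a three-wide design at the same hypothetical 3GHz clock, so that only the width differs. Real sustained throughput is always well below this bound because of branch mispredictions, cache misses and dependencies between instructions.

```python
def peak_decode_rate(decode_width: int, clock_ghz: float) -> float:
    """Upper bound on instructions decoded per second, in billions.

    Ignores stalls, mispredictions and cache misses, so sustained
    throughput in practice is far lower than this figure.
    """
    return decode_width * clock_ghz

# Same hypothetical clock for both, so only decode width changes.
two_wide = peak_decode_rate(decode_width=2, clock_ghz=3.0)    # Cortex-A73-style
three_wide = peak_decode_rate(decode_width=3, clock_ghz=3.0)  # Cortex-A75-style

print(f"2-wide: {two_wide:.1f} billion instructions/s peak")
print(f"3-wide: {three_wide:.1f} billion instructions/s peak")
print(f"peak decode headroom: {three_wide / two_wide:.2f}x")
```

The 1.5x figure is headroom, not a performance prediction: the extra decode slot only pays off when the rest of the pipeline can keep up.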

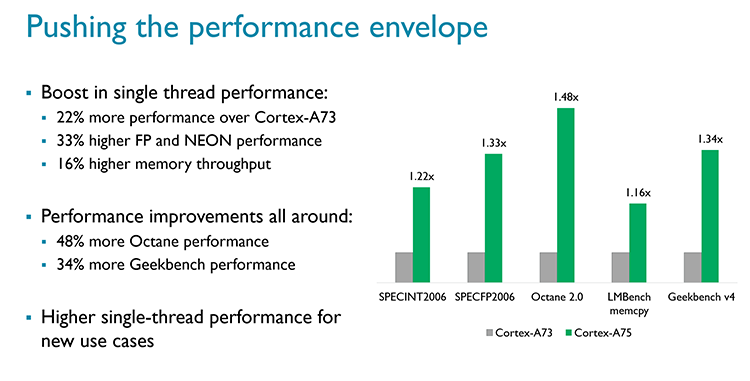

ARM has also recognised that single-threaded performance remains important for a number of present and emerging workloads. Thanks to incremental architectural improvements and a higher potential frequency, Cortex-A75 offers around 20 per cent more single-threaded performance than the previous generation.

It is reasonable to assume that the processor will appear in premium handsets released next year, most likely unveiled at Mobile World Congress, running at speeds of up to 3GHz on a 10nm manufacturing process.
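Single-threaded performance is, to a first approximation, instructions per cycle (IPC) multiplied by clock frequency, so architectural and frequency gains compound rather than simply add. The split below (10 per cent IPC, 9 per cent clock) is purely hypothetical, chosen only to show how two modest gains combine into roughly the 20 per cent figure; ARM has not published such a breakdown here.

```python
def relative_performance(ipc_gain: float, freq_gain: float) -> float:
    """Combined speedup when performance is modelled as IPC x frequency.

    Gains are fractional, e.g. 0.10 for +10 per cent.
    """
    return (1 + ipc_gain) * (1 + freq_gain)

# Hypothetical split of the ~20 per cent figure; not ARM data.
speedup = relative_performance(ipc_gain=0.10, freq_gain=0.09)
print(f"combined single-threaded gain: {speedup - 1:.1%}")
```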

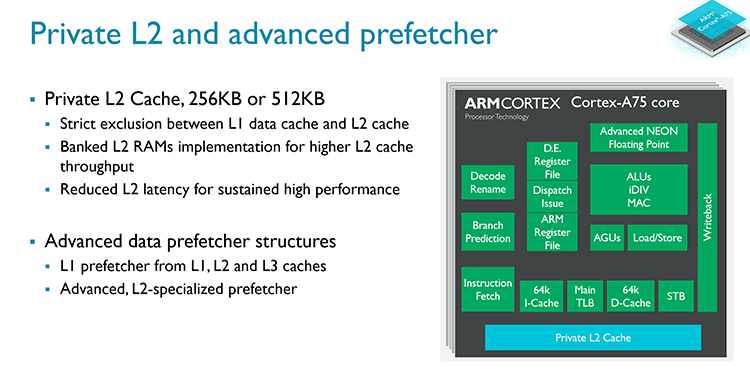

Looking for ways to increase performance without burning additional power, ARM has outfitted Cortex-A75 with a private L2 cache that runs at core speed and sits close to the processing core. Its size can be adjusted to suit the target market, and making the cache private reduces latency while keeping power consumption in check.

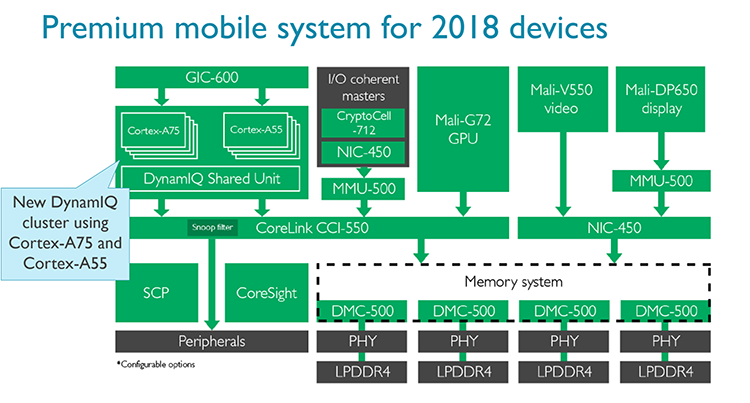

Arguably more interesting is how ARM sees future CPU development. The unified L3 cache has been moved out into a separate block of the cluster called the DynamIQ Shared Unit (DSU).

The DSU serves every core in a cluster and, because the also-new Cortex-A55 uses the same DynamIQ building blocks, partners are free to choose how they implement the L3 cache across a range of processor configurations.
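A standard way to reason about why a fast private L2 backed by a shared L3 in the DSU helps is average memory access time (AMAT): each level's hit latency plus its miss rate multiplied by the cost of going one level further out. Every latency and miss rate below is a made-up round number, not a Cortex-A75 specification; the point is only that shaving cycles off the level nearest the core pays off on every L1 miss.

```python
from dataclasses import dataclass


@dataclass
class CacheLevel:
    name: str
    hit_latency: float  # cycles to return data on a hit
    miss_rate: float    # fraction of accesses reaching this level that miss


def amat(levels: list[CacheLevel], memory_latency: float) -> float:
    """Average memory access time in cycles, evaluated from DRAM inward.

    AMAT_i = hit_latency_i + miss_rate_i * AMAT_(i+1), where the cost
    beyond the last cache level is the main-memory latency.
    """
    cost = memory_latency
    for level in reversed(levels):
        cost = level.hit_latency + level.miss_rate * cost
    return cost


# Hypothetical hierarchy: L1, private L2, shared L3 in the DSU.
slow_l2 = [CacheLevel("L1", 4, 0.10), CacheLevel("L2", 20, 0.40), CacheLevel("L3", 40, 0.50)]
fast_l2 = [CacheLevel("L1", 4, 0.10), CacheLevel("L2", 12, 0.40), CacheLevel("L3", 40, 0.50)]

print(f"slower L2:        {amat(slow_l2, 200):.2f} cycles")
print(f"faster private L2: {amat(fast_l2, 200):.2f} cycles")
```

Only the L2 hit latency differs between the two hierarchies, yet the average cost of every memory access falls, which is the effect a close, core-speed L2 is meant to exploit.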

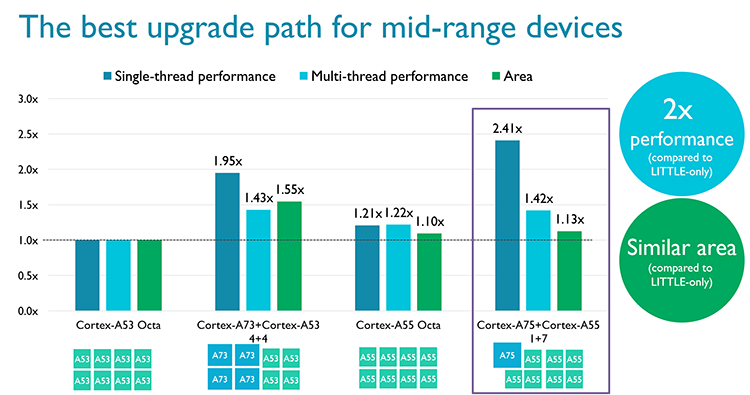

For example, partners can choose to use between one and four high-performance Cortex-A75 cores, or up to eight Cortex-A55 cores, or a combination of both in a DynamIQ big.LITTLE configuration.

The upshot, as shown above, is that partners building SoCs can fine-tune the CPU performance/power/area trade-off to best suit the intended application.

A DynamIQ big.LITTLE combination of one Cortex-A75 and seven Cortex-A55 cores uses about the same area as eight Cortex-A53 cores but, thanks to the big Cortex-A75, delivers heaps more single-threaded performance for time-sensitive applications.
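The configuration space described above can be enumerated with a short sketch. It encodes the limits given in this article (one to four Cortex-A75 cores, up to eight Cortex-A55 cores) plus one assumption worth stating plainly: that a single DynamIQ cluster tops out at eight cores in total, so big and LITTLE counts must sum to eight or fewer.

```python
MAX_BIG = 4      # Cortex-A75 cores per cluster, per the range quoted above
MAX_LITTLE = 8   # Cortex-A55 cores per cluster
MAX_CLUSTER = 8  # assumption: total core limit for one DynamIQ cluster


def dynamiq_configs() -> list[tuple[int, int]]:
    """Return every (big, little) core-count pair allowed by the limits above."""
    configs = []
    for big in range(MAX_BIG + 1):
        for little in range(MAX_LITTLE + 1):
            if 1 <= big + little <= MAX_CLUSTER:
                configs.append((big, little))
    return configs


configs = dynamiq_configs()
mixed = [(big, little) for big, little in configs if big > 0 and little > 0]

print(f"{len(configs)} configurations, {len(mixed)} of them mixed big.LITTLE")
print("1+7 allowed:", (1, 7) in configs)
```

Under these assumptions the 1+7 layout discussed above is one of 22 mixed options, which gives a sense of how much room SoC designers have to play with.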

In fact, even ARM doesn't know exactly how partners will choose to adopt Cortex-A75 in a big.LITTLE setup, and it will be interesting to see how the SoC guys take on the challenge.

Reading the above, it may seem as if Cortex-A75 has been primed for smartphones alone, as the last-generation Cortex-A73 was. That's not the case: the new chip has been endowed with additional features that lay the hardware groundwork for new kinds of processing.

For example, Cortex-A75 supports half-precision floating point, which is used in machine learning and artificial intelligence workloads. Cache stashing has been added to improve performance when multiple cores are running; there are server-class reliability, availability and serviceability (RAS) features such as improved error correction; and, pushing the performance boat out, there are improvements to the kind of hypervisor capability one may see in a server environment.
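Half precision (IEEE 754 binary16) stores a value in 16 bits rather than the 32 bits of single precision, halving memory and bandwidth per value at the cost of range and precision, a trade-off many machine learning workloads tolerate. Python's standard `struct` module can round-trip values through binary16 via its `'e'` format code, which makes the loss easy to see. This illustrates the number format itself, not anything specific to Cortex-A75's implementation.

```python
import struct


def to_half(x: float) -> float:
    """Round x to the nearest IEEE 754 half-precision value and back."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_single(x: float) -> float:
    """Round x to the nearest IEEE 754 single-precision value and back."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


for value in (0.1, 3.14159265, 1000.7):
    print(f"{value!r:>12}  single={to_single(value)!r:<22} half={to_half(value)!r}")

print("bytes per value: single =", struct.calcsize("<f"), "half =", struct.calcsize("<e"))
print("largest finite half:", to_half(65504.0))
```

Near 1,000, half precision can only represent steps of 0.5, which is tolerable for neural-network weights and activations but not for general-purpose arithmetic.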

Summary

If you take away only a few nuggets about Cortex-A75, they should be these: it is ARM's first high-performance application-class processor built on the nascent DynamIQ technology, which makes it simpler to build either homogeneous or big.LITTLE multi-core clusters within an SoC. Performance is improved over the previous generation through higher frequency and a wider decode stage, whilst an improved memory subsystem helps keep power consumption at similar levels.

This isn't a core for smartphones only: ARM believes it has the chops to deliver infrastructure-class performance in a mobile footprint, so it is also designed for the burgeoning networking market, specialised AI workloads, large-screen devices such as entry-level laptops, and potential opportunities within machine learning.

What intrigues us most is how SoC vendors will take advantage of the new big.LITTLE capability enabled by pairing it with the Cortex-A55 via DynamIQ. Read on to the following page to see what the Cortex-A55 has under the hood.