A cut in the frame-buffer

AMD and NVIDIA are like siblings who like to quarrel. Each lives to outshine the other, so when one's on to a winner, a knee-jerk riposte from the other is inevitable, designed to take the lustre away from the new launch.

Just recently, NVIDIA released the GeForce GTX 560 Ti graphics card, asking partners to launch models costing in the region of £200. AMD, however, had no appropriate GPU with which to counter at the same price. Rather, as an immediate response, it engineered a solution in the form of a Radeon HD 6950 outfitted with 1,024MB of RAM instead of the 2,048MB found on the standard model, which currently retails for £220.

Now filtering through to the channel, the £200 Radeon HD 6950 vies for your attention as a mid-to-high-end graphics card with all the goodies in tow.

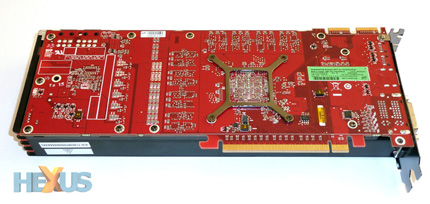

An inspection of the anachronistic ATI-branded card shows that it's strictly reference in design, much like the 6950 2GB we saw back in December. The only meaningful difference between the two cards is the reduced frame-buffer.

The BIOS-toggling switch remains.

Frequencies are slap-bang reference, too, which means the card chimes in with an 800MHz core and 5,000MHz memory, though partners have already launched custom versions of the card - based on existing HD 6950 2GB models - into the channel.

The smaller frame-buffer lets retailers hit a lower street price, but it may come at the expense of performance at high resolutions and high-quality image settings. That combination of resolution and IQ is where memory demands balloon, and where a larger local frame-buffer lets the graphics card chug along without potentially being suffocated by data that no longer fits on board.
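To put rough numbers on that, here's a back-of-the-envelope sketch in Python. It is an illustration under stated assumptions, not a measurement: we assume a 32-bit colour buffer plus a 32-bit depth/stencil buffer, and that multisampled storage scales linearly with the sample count. The function name `render_target_mib` is our own invention, and the figure ignores textures, geometry and any extra buffers a game engine allocates, which usually account for far more memory than the render target itself.

```python
def render_target_mib(width, height, msaa_samples=1, bytes_per_pixel=4):
    """Approximate colour + depth render-target size in MiB.

    Assumptions (ours, for illustration): 4 bytes of colour and
    4 bytes of depth/stencil per sample, with storage scaling
    linearly with the MSAA sample count.
    """
    pixels = width * height
    colour_bytes = pixels * bytes_per_pixel * msaa_samples
    depth_bytes = pixels * 4 * msaa_samples
    return (colour_bytes + depth_bytes) / (1024 * 1024)


# Example resolutions; the last is three 1,920x1,080 panels side by side.
resolutions = {
    "1680x1050": (1680, 1050),
    "1920x1080": (1920, 1080),
    "2560x1600": (2560, 1600),
    "5760x1080": (5760, 1080),
}

if __name__ == "__main__":
    for name, (w, h) in resolutions.items():
        size = render_target_mib(w, h, msaa_samples=4)
        print(f"{name} with 4x MSAA: roughly {size:.1f} MiB")
```

Even on these simplified assumptions the render target grows in step with pixel count and sample count, so a triple-screen setup asks for roughly three times the space of a single 1,920x1,080 panel before a single texture has been loaded.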

Conjecturing somewhat, we expect the Radeon HD 6950 1GB to benchmark very close to the 2GB-equipped card on monitors whose native resolutions are 1,680x1,050 and 1,920x1,080 pixels. Folks who own a 2,560x1,600-capable monitor or, more realistically, run two or three medium-resolution monitors side-by-side in an Eyefinity setup may find the card wanting. But there's only one way to find out, right?